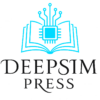

PySpark on Windows: Three Errors Every Data Scientist Hits

HADOOP_HOME is unset, pyspark.pandas won’t import, and that index warning — all solved in one place

If you have ever tried to run PySpark on a Windows laptop, you have almost certainly seen at least one of these:

java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset.

ImportError: cannot import name '_builtin_table' from 'pandas.core.common'

PandasAPIOnSparkAdviceWarning: If `index_col` is not specified for `read_parquet`,

the default index is attached which can cause additional overhead.

None of these errors means your Spark installation is broken. Each one has a specific, fixable cause. This article walks through all three — what causes them, which fix applies to your situation, and the exact setup cell that prevents all of them from appearing in the first place.

The Environment This Applies To

- OS: Windows 10 / 11

- PySpark: 4.x (tested on 4.1.1)

- Python: 3.10–3.12

- Pandas: 2.2.x (more on this below)

- PyArrow: ≥ 15.0

- Java (JDK): 17 or 21 (required for PySpark 4.x — see below)

If you are on macOS or Linux, Error 1 does not affect you. Errors 2 and 3 are cross-platform.

Error 1 — HADOOP_HOME and hadoop.home.dir are unset

What causes it

PySpark on Windows requires a small binary called winutils.exe to manage file system permissions when writing output. On macOS and Linux the operating system handles this natively. On Windows, Hadoop’s shell layer looks for winutils.exe and fails with a FileNotFoundException if it is not found.

This error appears the moment you try to write anything — a Parquet file, a CSV, an ORC file — regardless of whether your Spark computation itself is correct.

# This triggers the error on Windows without winutils

df_enriched.write.mode("overwrite").parquet("../data/output/")

The fix

Step 1. Check your Java version first

PySpark 4.x dropped support for Java 8 and 11 and requires Java 17 or 21. If you are on an older JDK, upgrade before proceeding: an incompatible Java version can produce errors that look like winutils or Hadoop problems. See the JDK upgrade steps below.

Step 2. Download winutils

Download the full bin folder from the community repository:

https://github.com/kontext-tech/winutils/tree/master/hadoop-3.4.0-win10-x64/bin

Important: Do not download only winutils.exe and hadoop.dll. In practice, copying just those two files is often not sufficient. Download the entire bin folder and replace C:\hadoop\bin\ with its contents. This is the step that resolves the error when everything else has already been tried.

PySpark 4.x is built against Hadoop 3.4.x, so the hadoop-3.4.0 binaries are a close match.

Step 3. Create the folder structure

C:\hadoop\

└── bin\

├── winutils.exe

├── hadoop.dll

└── (all other files from the bin folder)

Step 4. Set the environment variable before any Spark imports

import os

os.environ["HADOOP_HOME"] = r"C:\hadoop"

os.environ["hadoop.home.dir"] = r"C:\hadoop"

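These lines must run before the SparkSession is created; placing them before from pyspark.sql import SparkSession is the simplest way to guarantee that. The JVM inherits its environment when it launches and ignores any later changes. Strictly speaking, only HADOOP_HOME is read as an environment variable. hadoop.home.dir is a Java system property, so the second line is harmless but has no effect on its own.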

Why not set it in Windows system variables? You can, and that is the permanent solution. Open PowerShell as Administrator and run:

[System.Environment]::SetEnvironmentVariable("HADOOP_HOME", "C:\hadoop", "Machine")

But setting it in code at the top of the notebook is safer for shared environments where the system variable may not be present.
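Before starting Spark, it can help to confirm that the folder from Step 3 is actually in place. The following is a minimal sketch, not part of PySpark: the helper names missing_hadoop_files and check_hadoop_home are made up for illustration, and the list of required files is an assumption based on the two files this article names.

```python
import os
from pathlib import Path

# Assumption: these are the two files this article calls out by name.
# The full bin folder contains more, so extend the tuple if you want a stricter check.
REQUIRED_FILES = ("winutils.exe", "hadoop.dll")


def missing_hadoop_files(hadoop_home):
    """Return the required files that are absent from <hadoop_home>/bin."""
    bin_dir = Path(hadoop_home) / "bin"
    return [name for name in REQUIRED_FILES if not (bin_dir / name).is_file()]


def check_hadoop_home():
    """Return a list of readable problems; an empty list means the layout looks right."""
    home = os.environ.get("HADOOP_HOME")
    if not home:
        return ["HADOOP_HOME is not set"]
    return [f"missing {Path(home) / 'bin' / name}" for name in missing_hadoop_files(home)]


problems = check_hadoop_home()
print(problems or "HADOOP_HOME layout looks complete")
```

Run it after setting HADOOP_HOME and before creating the SparkSession. An empty problem list does not prove the binaries match your Spark build, only that the expected files exist.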

Upgrading to JDK 21

PySpark 4.x requires Java 17 or 21. If you are on Java 8 or 11, upgrade before doing anything else.

Step 1. Download the JDK 21 installer

Go to the Oracle Java Downloads page, select the Windows tab, and download the x64 Installer (.exe).

Step 2. Run the installer

Launch the .exe file and follow the wizard. Note the installation path — it is usually:

C:\Program Files\Java\jdk-21

Step 3. Update your environment variables

Open Settings and search for Edit the system environment variables.

- JAVA_HOME: Under System variables, find JAVA_HOME and change its value to C:\Program Files\Java\jdk-21. If it does not exist, click New and add it.

- Path: Find the Path variable, click Edit, and delete any old Java entries (e.g. C:\Program Files\Java\jdk1.8.x\bin). Add a new entry: %JAVA_HOME%\bin.

Click OK on all windows to save.

Step 4. Verify

Open a new Command Prompt (this is necessary to refresh the variables) and run:

java -version

Expected output:

java version "21.x.x" ...
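The same check can be done from Python, which is handy at the top of a notebook. This is a sketch under stated assumptions: parse_java_major and installed_java_major are illustrative helper names, and the parser only understands the usual version "..." string that java -version prints.

```python
import re
import subprocess


def parse_java_major(version_output):
    """Extract the major Java version from `java -version` output.

    Handles the legacy scheme ("1.8.0_391" means Java 8) and the modern
    one ("21.0.2" means Java 21). Returns None if no version string is found.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    major = int(match.group(1))
    if major == 1 and match.group(2) is not None:
        return int(match.group(2))
    return major


def installed_java_major():
    """Run `java -version` and return the major version, or None if java is not on PATH."""
    try:
        # `java -version` writes its report to stderr, not stdout.
        result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    except FileNotFoundError:
        return None
    return parse_java_major(result.stderr)


major = installed_java_major()
if major is None or major < 17:
    print(f"Java {major} found; PySpark 4.x needs Java 17 or 21")
else:
    print(f"Java {major} found")
```

If this reports an old version right after an upgrade, the notebook kernel was probably started before the environment variables changed. Restart it so it picks up the new Path.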

Error 2 — ImportError: cannot import name '_builtin_table'

What causes it

This error appears when you run:

import pyspark.pandas as ps

The full traceback points to:

File .../pyspark/pandas/groupby.py:48

from pandas.core.common import _builtin_table

ImportError: cannot import name '_builtin_table' from 'pandas.core.common'

The cause is a Pandas version incompatibility. _builtin_table was a private internal of pandas.core.common that is no longer present in Pandas 3.x. If your environment has Pandas 3.x installed, which is now the default when you run a plain pip install pandas, pyspark.pandas cannot import cleanly.

Check your versions:

import pandas as pd

import pyspark

print(f"Pandas: {pd.__version__}")

print(f"PySpark: {pyspark.__version__}")

If you see Pandas: 3.x.x alongside PySpark: 4.x.x, this is your problem.
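When pyspark.pandas is the thing that fails to import, it is useful to check versions without importing anything heavy. The sketch below reads installed versions through the standard library's importlib.metadata. The helper names are illustrative, and the "major version below 3" rule simply encodes this article's compatibility claim rather than anything PySpark itself enforces.

```python
from importlib.metadata import PackageNotFoundError, version


def major_version(version_string):
    """Return the leading integer of a version string such as "2.2.3"."""
    return int(version_string.split(".")[0])


def pandas_ok_for_pyspark_pandas(pandas_version):
    """True if the Pandas major version is below 3 (this article's rule of thumb)."""
    return major_version(pandas_version) < 3


def installed_version(package):
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


pandas_version = installed_version("pandas")
if pandas_version is None:
    print("pandas is not installed")
elif pandas_ok_for_pyspark_pandas(pandas_version):
    print(f"pandas {pandas_version}: fine for pyspark.pandas")
else:
    print(f"pandas {pandas_version}: too new, pin pandas<3 before importing pyspark.pandas")
```

Because nothing is imported, this works even in an environment where import pyspark.pandas would raise.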

The fix

Downgrade Pandas to 2.2.3, the version this setup was tested with and one that pyspark.pandas in the 4.x line supports:

pip install "pandas==2.2.3"

Restart your kernel after the install, then verify:

import pandas as pd

print(pd.__version__) # should print 2.2.3

Why not upgrade PySpark instead? As of early 2026, no released version of PySpark has updated pyspark.pandas to be compatible with Pandas 3.x. The _builtin_table dependency and several other private Pandas internals are still referenced in the pyspark.pandas source. Databricks, the company founded by Spark's original creators, explicitly pins pandas<3 in their managed environments for this reason.

If you cannot downgrade Pandas

If your project requires Pandas 3.x for other dependencies, skip pyspark.pandas entirely and use the native Spark DataFrame API instead. It is faster, has no Pandas version dependency, and is what production PySpark pipelines use:

# Instead of pyspark.pandas (this import fails under Pandas 3.x):

# import pyspark.pandas as ps

# psdf = ps.read_parquet("data.parquet")

# Use the native API:

from pyspark.sql import SparkSession

spark = SparkSession.builder.appName("app").getOrCreate()

df = spark.read.parquet("data.parquet")

df.show(3)

For small result sets that need Pandas operations, collect after aggregation:

# Collect a small aggregated result to Pandas

result_pd = (

df.groupBy("payment_type")

.agg({"fare_amount": "mean"})

.toPandas() # safe because the result is small

)

Error 3 — PandasAPIOnSparkAdviceWarning: index_col not specified

What causes it

This is a warning, not an error. Your code still runs. But it fires every time you call ps.read_parquet() without specifying index_col:

PandasAPIOnSparkAdviceWarning: If `index_col` is not specified for `read_parquet`,

the default index is attached which can cause additional overhead.

The reason is architectural. pandas-on-Spark is a distributed system — rows live on different executor machines. A Pandas-style integer index (0, 1, 2, …) requires Spark to generate a globally consistent sequence across all partitions, which is an expensive operation. When you do not specify index_col, Spark has to do this work silently, and it warns you that it is doing so.
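To see why a global 0, 1, 2, … index costs extra work, here is a pure-Python simulation. It is an illustration of the idea only, not Spark's implementation: partitions are plain lists and assign_global_index is a made-up function. The point is that no partition can number its rows until it knows how many rows come before it, which forces an extra counting pass over the data.

```python
from itertools import accumulate


def assign_global_index(partitions):
    """Attach a 0..n-1 index to rows spread over several partitions.

    Pass 1 counts the rows in every partition. Pass 2 uses the running
    totals as starting offsets. The counting pass is the added overhead.
    """
    counts = [len(part) for part in partitions]        # pass 1: one count per partition
    offsets = [0] + list(accumulate(counts))[:-1]       # rows that precede each partition
    return [
        [(offset + i, row) for i, row in enumerate(part)]  # pass 2: local position + offset
        for part, offset in zip(partitions, offsets)
    ]


partitions = [["a", "b"], ["c"], ["d", "e", "f"]]
indexed = assign_global_index(partitions)
print(indexed)
```

pandas-on-Spark exposes a compute.default_index_type option that controls how the default index is built, trading ordering guarantees against cost. The options page in the pandas API on Spark documentation describes the available values.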

The fix

Give pandas-on-Spark a real index so it does not have to generate one. If your data has a natural key column, pass it as index_col:

import pyspark.pandas as ps

psdf = ps.read_parquet(

"../data/taxi/yellow_tripdata_2023-01.parquet",

index_col="tpep_pickup_datetime"

)

print(psdf.shape)

Passing index_col=None explicitly does not help. None is already the parameter's default, so the default index is still attached and the warning still fires.

If no column is a suitable index and you accept the default one, silence PandasAPIOnSparkAdviceWarning for the session instead:

import warnings

from pyspark.pandas.utils import PandasAPIOnSparkAdviceWarning

warnings.filterwarnings("ignore", category=PandasAPIOnSparkAdviceWarning)
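If silencing the category for the whole session feels too broad, Python's warnings.catch_warnings() context manager can limit the filter to a single call. A minimal sketch follows. AdviceWarning and noisy_read are hypothetical stand-ins for PandasAPIOnSparkAdviceWarning and a ps.read_parquet() call, used only so the example runs without Spark.

```python
import warnings


class AdviceWarning(Warning):
    """Hypothetical stand-in for PandasAPIOnSparkAdviceWarning."""


def noisy_read():
    """Hypothetical stand-in for a ps.read_parquet() call that emits the advice warning."""
    warnings.warn("default index is attached", AdviceWarning)
    return "data"


# Ignore the category only inside this block; the previous filters are restored on exit.
with warnings.catch_warnings():
    warnings.simplefilter("ignore", category=AdviceWarning)
    quiet_result = noisy_read()

# Outside that block the warning is emitted again; record it here to show that.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    loud_result = noisy_read()

print(f"warnings seen outside the quiet block: {len(caught)}")
```

In a real notebook you would swap in the actual warning class and wrap only the read call, so any other advice warnings still reach you.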

The Complete Setup Cell

Put this at the very top of every PySpark notebook on Windows. It applies the fixes for Errors 1 and 3 and assumes Pandas is already pinned at 2.2.3 (Error 2):

# ── Environment setup — must run before any other imports ────────────

import os

import warnings

# Fix 1: winutils path (Windows only)

os.environ["HADOOP_HOME"] = r"C:\hadoop"

os.environ["hadoop.home.dir"] = r"C:\hadoop"

# Not one of the three fixes: avoids the PYARROW_IGNORE_TIMEZONE warning on pyspark.pandas import

os.environ["PYARROW_IGNORE_TIMEZONE"] = "1"

# Fix 3: suppress pandas-on-Spark advisory warnings

from pyspark.pandas.utils import PandasAPIOnSparkAdviceWarning

warnings.filterwarnings("ignore", category=PandasAPIOnSparkAdviceWarning)

# ── Imports ──────────────────────────────────────────────────────────

from pyspark.sql import SparkSession

from pyspark.sql.functions import (

col, round as spark_round, when, unix_timestamp, # alias round so the Python builtin is not shadowed

avg, count, stddev,

sum as spark_sum,

hour, month, broadcast

)

import pyspark.sql.functions as F

import pyspark.pandas as ps # requires pandas==2.2.3

# ── Session ──────────────────────────────────────────────────────────

spark = (

SparkSession.builder

.appName("My PySpark App")

.config("spark.driver.memory", "4g")

.getOrCreate()

)

# ── Verify ───────────────────────────────────────────────────────────

import pandas as pd

print(f"Spark: {spark.version}")

print(f"PySpark: {__import__('pyspark').__version__}")

print(f"Pandas: {pd.__version__}")

# ── Load data ────────────────────────────────────────────────────────

psdf = ps.read_parquet(

"../data/taxi/yellow_tripdata_2023-01.parquet",

index_col=None # default index; the advice warning is silenced by the filter above

)

print(f"Shape: {psdf.shape}")

Expected output:

Spark: 4.1.1

PySpark: 4.1.1

Pandas: 2.2.3

Shape: (3066766, 19)

Version Compatibility Reference

| Component | Required | Notes |

|---|---|---|

| PySpark | 4.x | Tested on 4.1.1 |

| Pandas | 2.2.3 | 3.x breaks pyspark.pandas |

| PyArrow | ≥ 15.0 | Set PYARROW_IGNORE_TIMEZONE=1 |

| Python | 3.10–3.12 | 3.13 untested |

| Java (JDK) | 17 or 21 | PySpark 4.x dropped Java 8 and 11 support |

| winutils | hadoop-3.4.0 | Windows only; use the full bin folder |

Pin these in your requirements.txt:

pyspark==4.1.1

pandas==2.2.3

pyarrow>=15.0.0

numpy>=1.26

Quick Reference

| Error | Root cause | Fix |

|---|---|---|

| HADOOP_HOME and hadoop.home.dir are unset | winutils.exe missing or incomplete | Download full bin folder from hadoop-3.4.0, set HADOOP_HOME |

| ImportError: cannot import name '_builtin_table' | Pandas 3.x removed private API | pip install "pandas==2.2.3" |

| PandasAPIOnSparkAdviceWarning: index_col not specified | No index column → overhead | Pass a real column as index_col, or filter the warning category |

| Errors persist despite correct HADOOP_HOME | Incompatible JDK version | Upgrade to JDK 21 and update JAVA_HOME |

Summary

None of these errors indicate a broken Spark installation. They are all configuration or dependency version problems with deterministic fixes. The pattern is:

- Upgrade to JDK 21 first. PySpark 4.x requires Java 17 or 21. An incompatible Java version causes errors that can look like winutils or Hadoop problems.

- Install the full bin folder. Downloading only winutils.exe and hadoop.dll is often not enough; replace the entire C:\hadoop\bin\ directory.

- Set HADOOP_HOME before the JVM starts. Two lines, placed before any PySpark import.

- Pin Pandas at 2.2.3. pyspark.pandas has not caught up with Pandas 3.x yet.

- Give ps.read_parquet() a real index_col, or filter PandasAPIOnSparkAdviceWarning. Passing index_col=None is the default and changes nothing.

The complete setup cell above handles all of this. Copy it into your notebook template and you will not see any of these errors again.

Tags: PySpark, Python, Data Engineering, Windows, Pandas
